NVIDIA Donates GPU DRA Driver to the Kubernetes Community

Original: Advancing Open Source AI, NVIDIA Donates Dynamic Resource Allocation Driver for GPUs to Kubernetes Community

NVIDIA used KubeCon Europe on March 24, 2026 to make a strategic infrastructure move: it is donating the NVIDIA Dynamic Resource Allocation (DRA) Driver for GPUs to the Cloud Native Computing Foundation. The practical meaning is that a key piece of GPU orchestration software is moving from vendor-governed control toward community ownership within the Kubernetes ecosystem.

That matters because Kubernetes has become the default control plane for many enterprise AI workloads. As more model training and inference systems move into containerized environments, GPU allocation is no longer just a hardware problem. It is a scheduling, isolation, and resource-sharing problem at cluster scale. NVIDIA is positioning the DRA driver as a standard way to make that layer more transparent and more programmable.

What the driver is meant to improve

- Smarter GPU sharing, including support for NVIDIA Multi-Process Service and Multi-Instance GPU technologies.

- Support for multi-node interconnect setups such as NVIDIA Multi-Node NVLink, which matters for very large AI training systems.

- Dynamic reconfiguration of hardware allocations as workload needs change.

- Fine-grained resource requests so users can ask for specific compute, memory, or interconnect arrangements.

NVIDIA paired the donation with a broader message about open AI infrastructure. The company said it worked with the CNCF Confidential Containers community to add GPU support to Kata Containers, extending confidential computing techniques to GPU-accelerated workloads. It also said the KAI Scheduler has entered the CNCF Sandbox stage and that Grove, a Kubernetes API for orchestrating AI workloads on GPU clusters, is being integrated with the llm-d inference stack.

The partner list around the project is also notable. NVIDIA said AWS, Broadcom, Canonical, Google Cloud, Microsoft, Nutanix, Red Hat, and SUSE are all involved in pushing these features forward. That does not make Kubernetes-based AI infrastructure simple overnight, but it does increase the odds that GPU orchestration patterns become more standardized across vendors instead of staying fragmented inside proprietary tooling.

For AI platform teams, the announcement is less about one driver and more about governance. Moving a core GPU scheduling component into a vendor-neutral foundation can make it easier for operators, researchers, and software vendors to build on a shared interface. In a market where AI clusters are growing rapidly and infrastructure complexity keeps rising, that kind of standardization can be as important as raw silicon speed. Source: NVIDIA Blog.

Related Articles

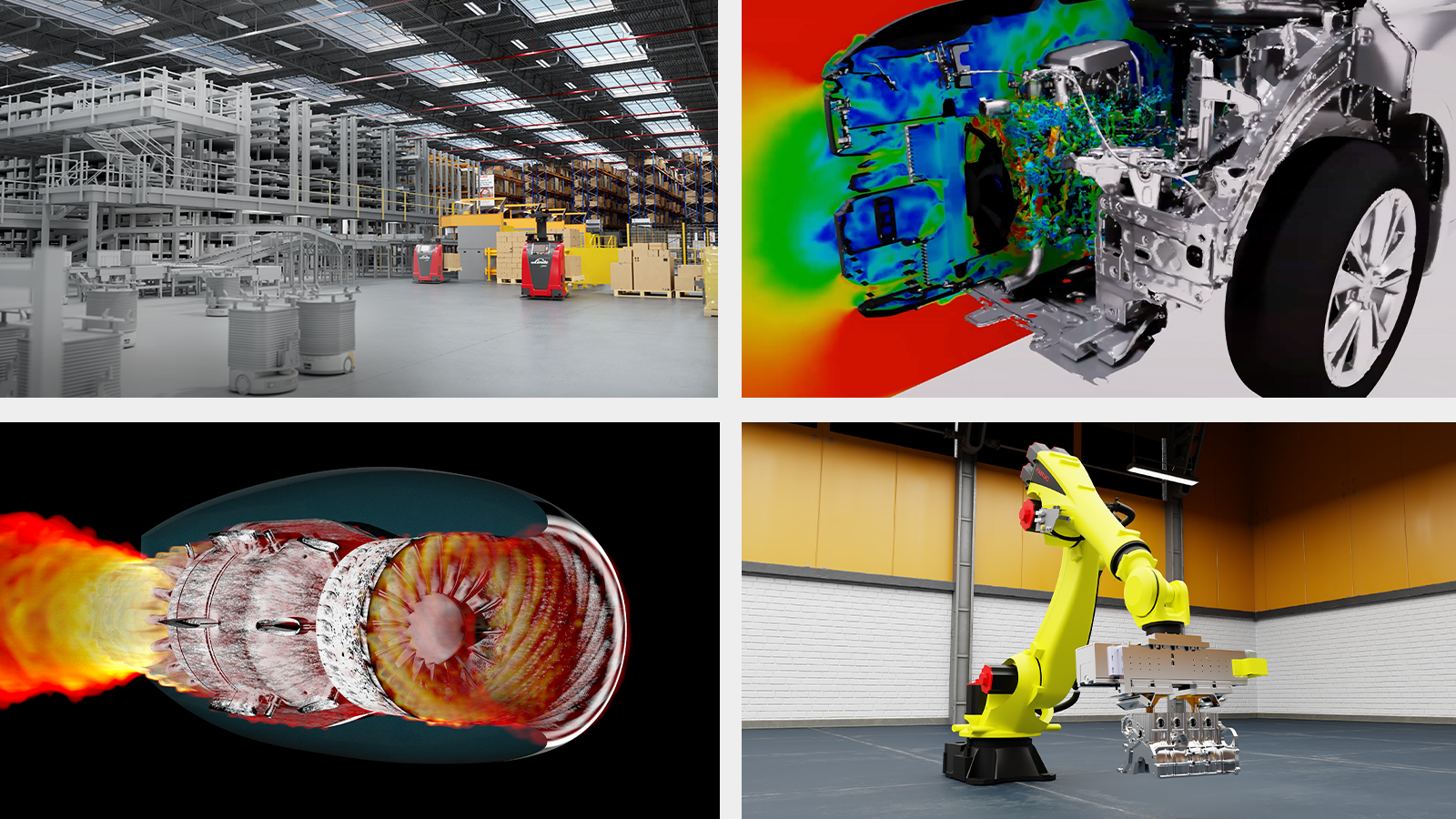

NVIDIA said on March 16, 2026 that Cadence, Dassault Systèmes, PTC, Siemens and Synopsys are bringing NVIDIA-powered AI agents and GPU-accelerated software into industrial workflows. The announcement spans chip design, automotive simulation, digital twins and manufacturing infrastructure across AWS, Google Cloud, Microsoft Azure, OCI and major OEM partners.

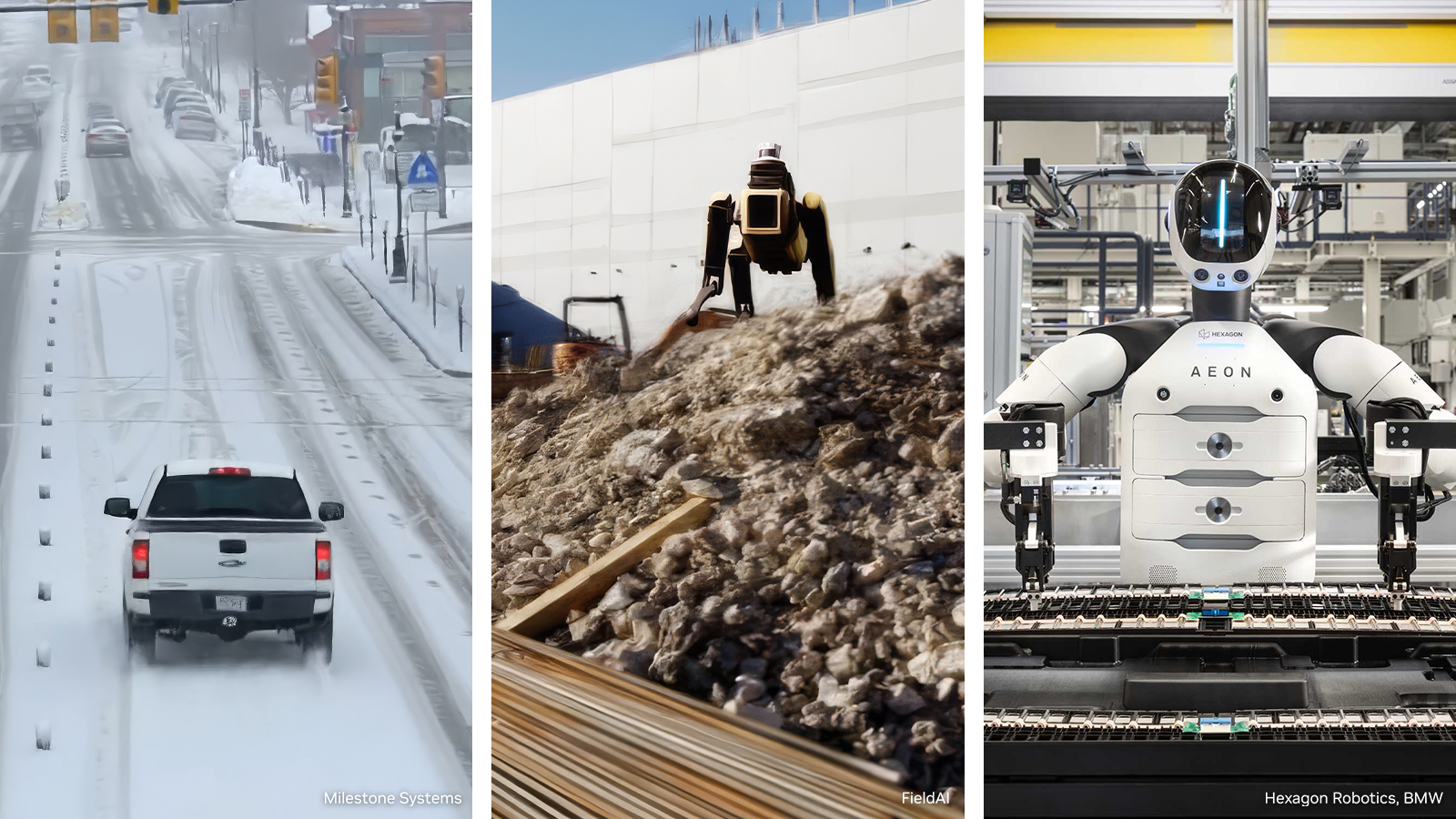

NVIDIA on March 16, 2026 introduced an open reference architecture for generating, augmenting and evaluating training data for robotics, vision AI agents and autonomous vehicles. Microsoft Azure and Nebius are integrating the blueprint, and NVIDIA said the package is expected to land on GitHub in April.

NVIDIA announced SOL-ExecBench on March 20, 2026, a benchmark for real-world GPU kernels that scores optimized CUDA and PyTorch code against Speed-of-Light hardware bounds on NVIDIA B200 systems. The release packages 235 kernel optimization problems drawn from 124 AI models across BF16, FP8, and NVFP4 workloads.

Comments (0)

No comments yet. Be the first to comment!