MacMind made HN see transformers with the hood open

Original: Show HN: MacMind – A transformer neural network in HyperCard on a 1989 Macintosh

MacMind caught HN’s attention because it makes a transformer feel small enough to inspect. The project implements a complete 1,216-parameter, single-layer, single-head transformer in HyperTalk, the scripting language behind Apple’s HyperCard. The author says it was trained on a Macintosh SE/30, and every part of the model can be read inside HyperCard’s script editor.

The task is deliberately narrow: learn the bit-reversal permutation used at the start of the Fast Fourier Transform. MacMind is not told the formula. It receives random examples and learns the positional pattern through embeddings, positional encoding, scaled dot-product self-attention, cross-entropy loss, backpropagation and stochastic gradient descent. The repository also includes a pre-trained stack, a blank stack for training, and a Python/NumPy reference implementation to validate the math.
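For readers who have not met the bit-reversal permutation, here is a minimal Python sketch of the target mapping. It is not taken from the MacMind repository: the function names and the length-8 example are assumptions chosen for illustration.

```python
def bit_reverse(index: int, num_bits: int) -> int:
    """Return index with its lowest num_bits bits in reversed order."""
    reversed_index = 0
    for _ in range(num_bits):
        # Shift the result left and append the current lowest bit of index.
        reversed_index = (reversed_index << 1) | (index & 1)
        index >>= 1
    return reversed_index


def bit_reversal_permutation(values: list) -> list:
    """Reorder values so that position i receives values[bit_reverse(i)]."""
    n = len(values)
    if n == 0 or n & (n - 1):
        raise ValueError("length must be a power of two")
    num_bits = n.bit_length() - 1
    return [values[bit_reverse(i, num_bits)] for i in range(n)]


# Illustrative length of 8 (3-bit indices); this length is an assumption.
example = bit_reversal_permutation(list(range(8)))
print(example)  # [0, 4, 2, 6, 1, 5, 3, 7]
```

The output depends only on where each item sits, never on its value, which is why the task suits a model that has to learn a positional pattern from examples.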

The community energy came from the contrast. Most AI conversation is about bigger clusters, larger context windows and more expensive inference. MacMind goes in the other direction. Its point is that the core training loop is not magic, even if modern scale is enormous. A recurring theme in the HN thread was that old hardware forces the concepts into view: when there are only 1,216 parameters, attention maps and weight updates stop being abstractions hidden behind infrastructure.
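To show how small one weight update is, here is a hedged Python sketch of a single stochastic gradient descent step on a softmax cross-entropy loss. It is a generic illustration, not MacMind's HyperTalk code: the one-layer linear model, the 3x2 weight shape, the learning rate and all names are assumptions.

```python
import math


def softmax(logits):
    """Convert logits to probabilities, shifting by the max for stability."""
    peak = max(logits)
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def sgd_step(weights, x, target, lr):
    """Run one forward/backward pass and return (updated_weights, loss)."""
    # Forward pass: one logit per output class.
    logits = [sum(w * xi for w, xi in zip(row, x)) for row in weights]
    probs = softmax(logits)
    loss = -math.log(probs[target])  # cross-entropy for a single example
    # Backward pass: d(loss)/d(logit_i) = prob_i - 1 if i is the target, else prob_i.
    grad_logits = [p - (1.0 if i == target else 0.0) for i, p in enumerate(probs)]
    # Gradient for weight (i, j) is grad_logits[i] * x[j]; step against it.
    updated = [
        [w - lr * g * xi for w, xi in zip(row, x)]
        for row, g in zip(weights, grad_logits)
    ]
    return updated, loss


# Hypothetical toy setup: 3 classes, 2 input features, all-zero initial weights.
weights = [[0.0, 0.0] for _ in range(3)]
losses = []
for _ in range(20):
    weights, loss = sgd_step(weights, x=[1.0, 0.5], target=2, lr=0.5)
    losses.append(loss)

print(round(losses[0], 4))  # 1.0986, i.e. ln(3) for a uniform guess over 3 classes
print(losses[-1] < losses[0])  # True
```

A full transformer backpropagates through more layers, but each update has the same shape: compute a loss, take its gradient, and move the weights a small step against it.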

That does not make MacMind a tiny LLM. It is a teaching demo for a structured permutation problem, not a language system. Its value is that it gives readers a working transformer where the parts are close enough to touch. For people who learned programming through HyperCard, there is also a nostalgic twist: a 1987 scripting environment can still host the basic machinery behind today’s model boom.

The broader lesson is useful for AI literacy. If a model can be opened, modified, saved, reopened and inspected on a vintage Mac, then “attention” becomes less of a branding word and more of a set of operations. That is why HN treated the project as more than a retro stunt.
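As an illustration of attention as a set of operations, here is a minimal pure-Python sketch of single-head scaled dot-product self-attention. It is not MacMind's code: the helper names, the three-token input and the identity projection matrices are assumptions made to keep the example readable.

```python
import math


def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def transpose(m):
    return [list(col) for col in zip(*m)]


def softmax_rows(m):
    """Apply a numerically stable softmax to each row."""
    result = []
    for row in m:
        peak = max(row)
        exps = [math.exp(v - peak) for v in row]
        total = sum(exps)
        result.append([e / total for e in exps])
    return result


def self_attention(x, w_q, w_k, w_v):
    """Return (output, attention_weights) for a single attention head."""
    q, k, v = matmul(x, w_q), matmul(x, w_k), matmul(x, w_v)
    scale = math.sqrt(len(k[0]))  # square root of the key dimension
    scores = [[s / scale for s in row] for row in matmul(q, transpose(k))]
    attention = softmax_rows(scores)  # each row sums to 1
    return matmul(attention, v), attention


# Hypothetical input: 3 tokens with 2-dimensional embeddings, identity projections.
identity = [[1.0, 0.0], [0.0, 1.0]]
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
output, attention = self_attention(tokens, identity, identity, identity)

for row in attention:
    print([round(a, 3) for a in row])
```

Each printed row is one token's attention map: a set of non-negative weights over all tokens that sums to 1 and determines how the value vectors are mixed.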

Sources: HN discussion and MacMind repository.

Related Articles

Stanford's public CS25 course is again operating as an open lecture stream for Transformer research, with Zoom access, recordings, and a community layer that extends beyond campus.

A recent Show HN post highlighted GuppyLM, a tiny education-first language model trained on 60K synthetic conversations with a deliberately simple transformer stack. The project stands out because readers can inspect and run the whole pipeline in Colab or directly in the browser.

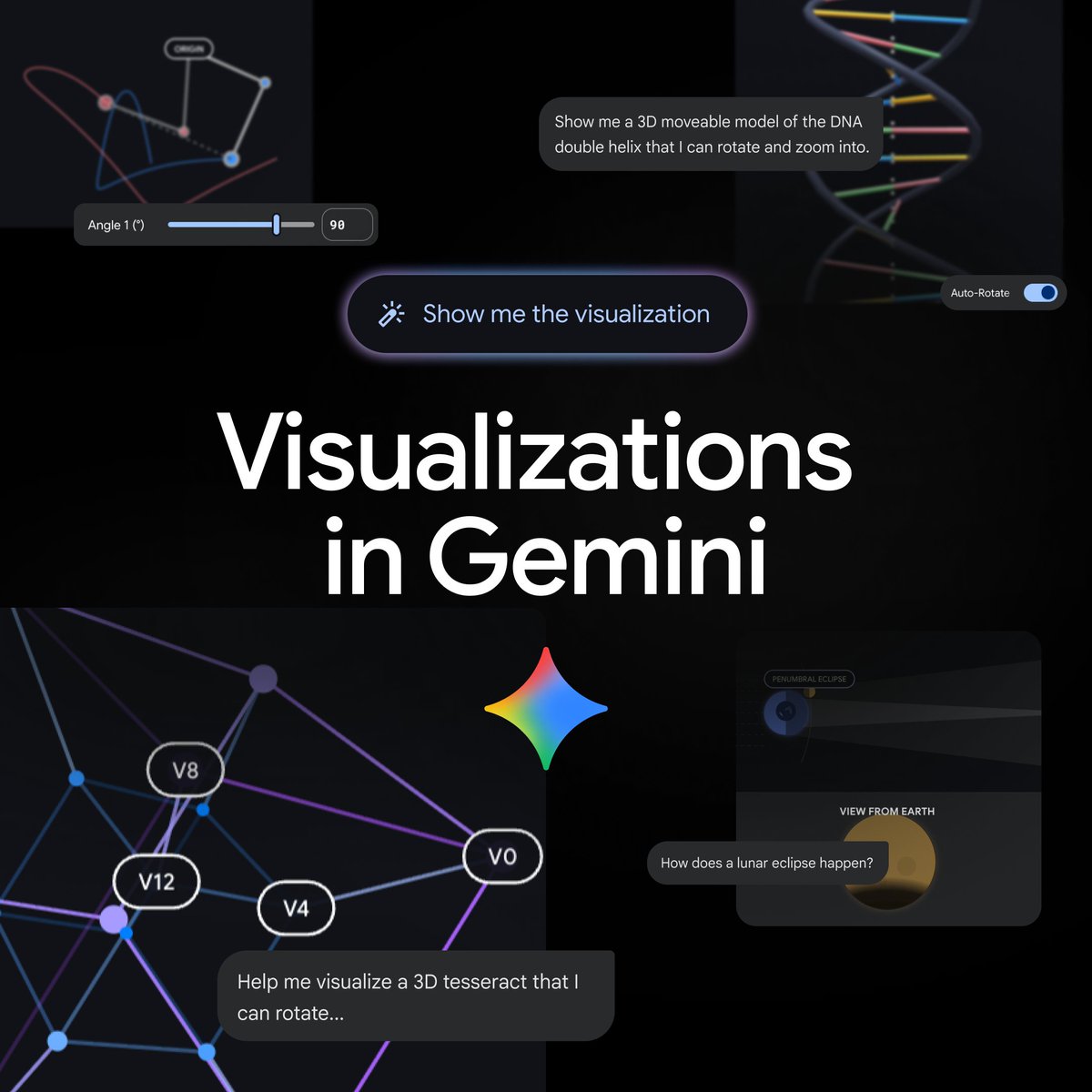

On April 9, 2026, Gemini said it can now generate interactive visualizations directly in chat. Google’s product page says the rollout adds functional simulations, adjustable parameters and 3D exploration to the Gemini app for global users on the Pro model.

Comments (0)

No comments yet. Be the first to comment!