ABB and NVIDIA Bring Omniverse-Based Physical AI Simulation to Industrial Robotics

Original: ABB Robotics Taps NVIDIA Omniverse to Deliver Industrial-Grade Physical AI at Scale

NVIDIA and ABB Robotics announced on March 9, 2026 that ABB is integrating NVIDIA Omniverse libraries directly into RobotStudio, its programming and simulation suite for industrial robots. The companies say the resulting product, RobotStudio HyperReality, closes the sim-to-real gap with 99% accuracy. It is scheduled to be available in the second half of 2026.

According to NVIDIA, the partnership is designed to bring industrial-grade physical AI to factory automation by letting manufacturers design, test and validate robot cells in physically accurate simulation before deployment. ABB says the new workflow can cut engineering time, reduce deployment costs by up to 40% and accelerate time to market by as much as 50%. Early pilots include Foxconn and Workr.

Why the announcement matters

- RobotStudio is already used by more than 60,000 robotics engineers worldwide, giving the Omniverse integration a large installed base from day one.

- The virtual controller runs the same firmware as the physical robot, which NVIDIA says enables 99% correlation between simulation and real-world behavior.

- Synthetic images generated in Omniverse can feed AI training pipelines, which links simulation directly to computer-vision model development.
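The announcement does not say how the 99% figure is computed. As a hypothetical illustration only, one way to quantify sim-to-real correlation is to log the same joint trajectory from the virtual controller and from the physical robot, then compute a Pearson correlation coefficient between the two traces. The sketch below assumes both traces are sampled at identical timestamps; the function name and the joint-angle samples are invented for illustration and do not come from ABB or NVIDIA.

```python
import math
from typing import Sequence


def pearson_correlation(sim: Sequence[float], real: Sequence[float]) -> float:
    """Pearson correlation between a simulated and a measured signal.

    Hypothetical metric: assumes both traces share the same sample timestamps.
    """
    if len(sim) != len(real) or len(sim) < 2:
        raise ValueError("traces must have equal length and at least two samples")
    n = len(sim)
    mean_s = sum(sim) / n
    mean_r = sum(real) / n
    # Covariance and variances (the 1/n factors cancel in the ratio).
    cov = sum((s - mean_s) * (r - mean_r) for s, r in zip(sim, real))
    var_s = sum((s - mean_s) ** 2 for s in sim)
    var_r = sum((r - mean_r) ** 2 for r in real)
    if var_s == 0 or var_r == 0:
        raise ValueError("a constant trace has undefined correlation")
    return cov / math.sqrt(var_s * var_r)


# Invented joint-angle samples (radians) for one robot axis.
simulated = [0.00, 0.10, 0.25, 0.45, 0.70, 0.90]
measured = [0.00, 0.11, 0.24, 0.46, 0.69, 0.91]

r = pearson_correlation(simulated, measured)
print(f"sim-to-real correlation: {r:.4f}")
```

Pearson correlation rewards matching the shape of a signal rather than its absolute values, so a real validation workflow would likely pair it with an absolute error measure such as RMSE on positions and cycle times.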

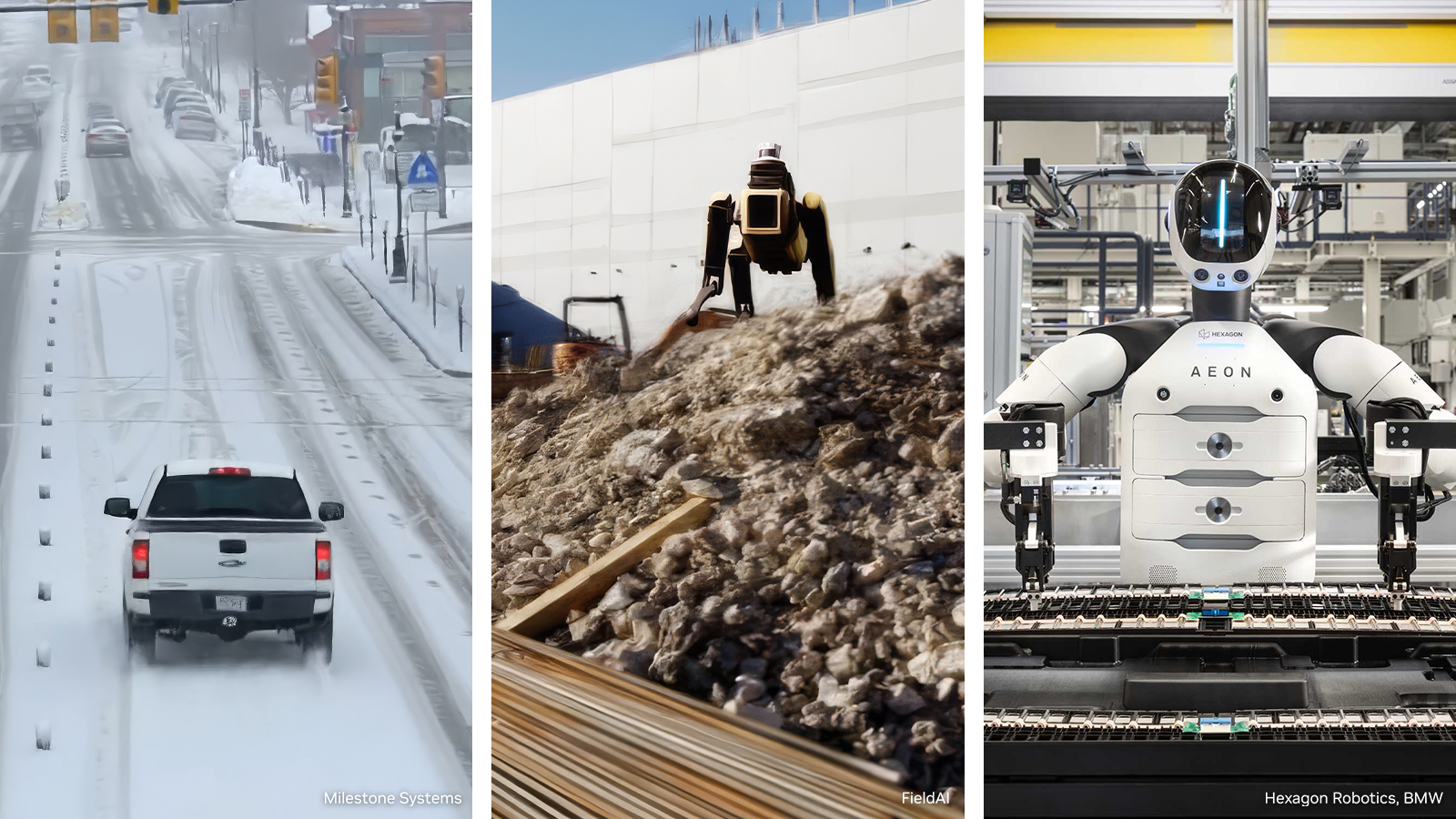

This is a meaningful industrial AI story because it moves physical AI from demo environments into a mainstream automation toolchain. The main value is not a humanoid showcase but the ability to validate cell layout, sensing, kinematics and AI behavior before hardware rollout. That matters for factories because commissioning delays and integration errors are expensive, and every problem caught in simulation instead of on the factory floor can translate into faster scale-up.
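As a toy illustration of what validating cell layout and kinematics before hardware rollout can involve, the sketch below checks whether the target points of a proposed cell fall inside the reachable workspace of a two-link planar arm and, if so, computes one inverse-kinematics solution for each. This is a deliberately simplified, hypothetical model (planar, two links, no joint limits, no collision checking) and is not how RobotStudio or Omniverse perform validation; the link lengths and target coordinates are invented.

```python
import math
from typing import Optional, Tuple


def two_link_ik(x: float, y: float, l1: float, l2: float) -> Optional[Tuple[float, float]]:
    """One inverse-kinematics solution for a planar 2-link arm based at the origin.

    Returns (shoulder, elbow) joint angles in radians, or None if (x, y)
    lies outside the annular workspace |l1 - l2| <= distance <= l1 + l2.
    """
    d2 = x * x + y * y
    # Law of cosines gives the cosine of the elbow angle.
    c2 = (d2 - l1 * l1 - l2 * l2) / (2 * l1 * l2)
    if c2 < -1.0 - 1e-12 or c2 > 1.0 + 1e-12:
        return None
    c2 = max(-1.0, min(1.0, c2))  # clamp tiny rounding overshoot at the boundary
    elbow = math.acos(c2)
    shoulder = math.atan2(y, x) - math.atan2(l2 * math.sin(elbow), l1 + l2 * math.cos(elbow))
    return shoulder, elbow


def forward_kinematics(shoulder: float, elbow: float, l1: float, l2: float) -> Tuple[float, float]:
    """End-effector position for the given joint angles (used to verify IK)."""
    x = l1 * math.cos(shoulder) + l2 * math.cos(shoulder + elbow)
    y = l1 * math.sin(shoulder) + l2 * math.sin(shoulder + elbow)
    return x, y


# Hypothetical cell: link lengths and target points in metres.
LINK1, LINK2 = 0.5, 0.4
targets = {"pick": (0.6, 0.3), "place": (0.2, 0.75), "fixture": (1.0, 0.2)}

for name, (tx, ty) in targets.items():
    solution = two_link_ik(tx, ty, LINK1, LINK2)
    if solution is None:
        print(f"{name}: UNREACHABLE, move this station closer to the robot")
    else:
        shoulder, elbow = solution
        print(f"{name}: reachable, shoulder={shoulder:.3f} rad, elbow={elbow:.3f} rad")
```

Catching an unreachable station like the hypothetical "fixture" point in software is cheap; discovering it after the cell is bolted down is not, which is the economic argument the paragraph above makes.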

For the broader AI market, the announcement is another sign that Omniverse is becoming a control layer for synthetic data, simulation and robot deployment rather than only a visualization platform. If ABB delivers the claimed cost and speed benefits at scale, the partnership could make physically grounded AI workflows more standard across manufacturing, logistics and other robot-heavy industries.

