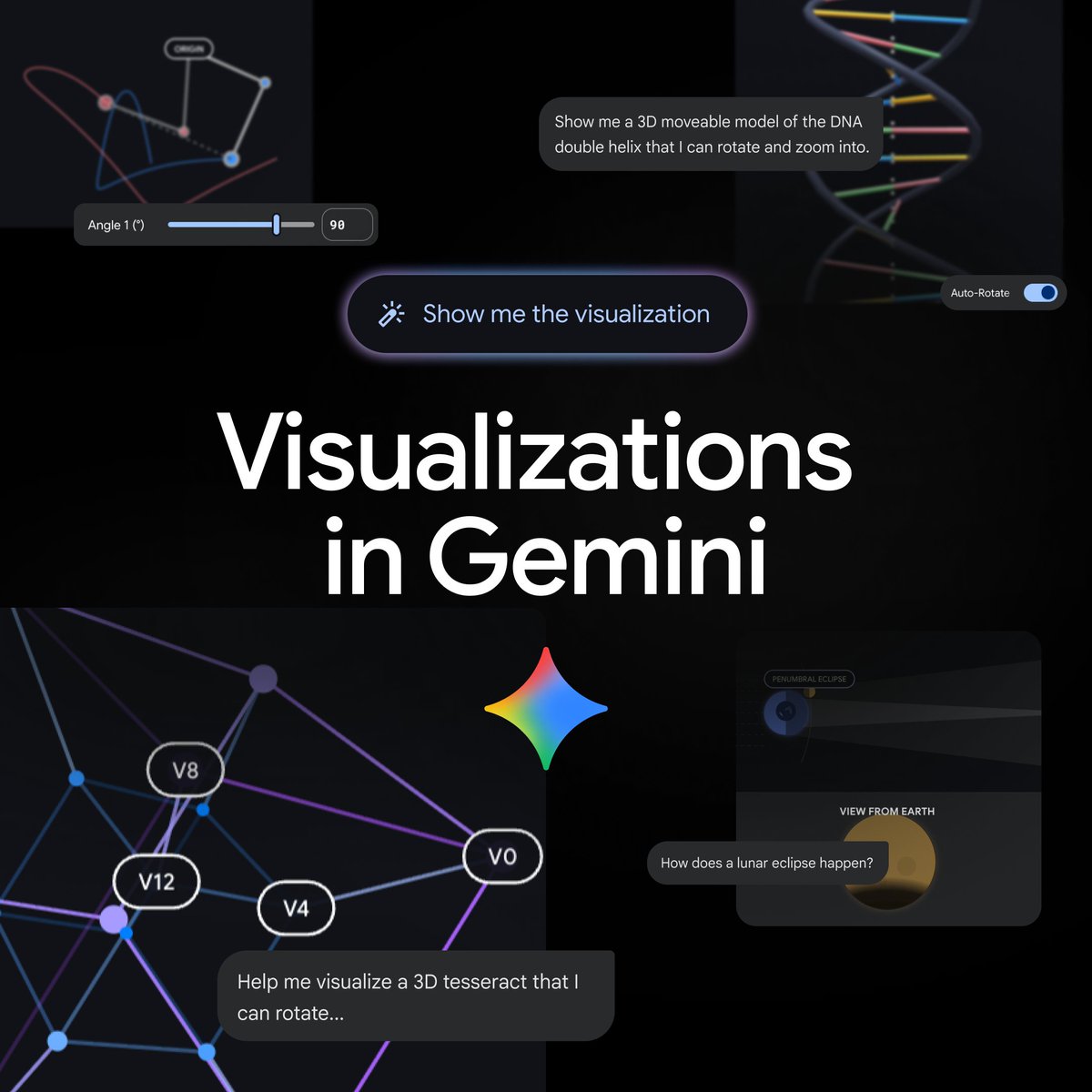

Gemini turns prompts into interactive visualizations and 3D models inside chat

Original: Gemini can now transform your questions and complex concepts into customizable interactive visualizations directly in your chat. Adjust variables, rotate 3D models, and explore data for a more immersive way to learn and explore in Gemini.

What Gemini added

On April 9, 2026, Gemini said on X that it can now turn questions and complex topics into customizable interactive visualizations directly in chat. Google’s accompanying product page says this goes beyond text explanations and static diagrams: Gemini generates functional simulations that users can manipulate rather than just read about.

Google’s example is orbital motion. Rather than looking at a fixed illustration, a user can adjust sliders or enter exact values for variables such as gravity strength and initial velocity to see how those changes affect whether an orbit remains stable. Google also says users can rotate a molecule or explore other complex systems from a single prompt. To access the feature, Google recommends choosing the Pro model in the Gemini app and asking Gemini to “show me” or “help me visualize” a concept.
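To make the orbital example concrete, here is a minimal sketch of the kind of relationship such a simulation exposes. It is not Gemini's implementation, which Google has not described. It assumes an idealized two-body model with a purely tangential launch; the function name `classify_orbit` and the parameters `gravity_strength` (standing in for the gravitational parameter GM), `initial_speed` and `radius` are hypothetical names chosen for illustration.

```python
import math


def classify_orbit(gravity_strength: float, initial_speed: float, radius: float = 1.0) -> str:
    """Classify a trajectory in an idealized two-body model.

    Assumptions (illustrative only): a point-mass central body, a launch
    tangential to the radius, and gravity_strength standing in for GM.
    """
    # Specific orbital energy: kinetic term minus gravitational potential term.
    energy = 0.5 * initial_speed**2 - gravity_strength / radius

    # At exactly this speed a tangential launch gives a circular orbit.
    circular_speed = math.sqrt(gravity_strength / radius)

    if math.isclose(initial_speed, circular_speed):
        return "circular"
    if energy < 0:
        return "elliptical"  # bound: the body stays in orbit
    return "escape"  # unbound: the body leaves and does not return


# Sweep the "initial velocity" slider while holding gravity fixed.
for speed in (0.8, 1.0, 1.2, 1.5):
    print(speed, classify_orbit(gravity_strength=1.0, initial_speed=speed))
```

Raising `gravity_strength` raises the speed needed to escape, which is the kind of cause-and-effect a slider makes visible in an interactive view.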

Why it matters

The feature changes what an answer in the Gemini app can be. Most assistants still explain complicated ideas primarily through prose, tables or a static image. Interactive visualizations turn the answer into something the user can probe. That is especially useful for science, engineering, finance and education use cases where insight often comes from changing one variable at a time and watching the system respond.

Google says the feature is rolling out globally to all Gemini app users, although it is not yet available for Education and Workspace accounts. The launch suggests that Google is positioning Gemini as a lightweight simulation layer as well as a reasoning layer. If that works, the product becomes more than a text-first chatbot: it becomes a place where users can ask a question, inspect a model, tweak assumptions and learn interactively without switching tools.

Sources: Gemini X post · Google product post

