NVIDIA rallies robotics leaders around production-scale physical AI

Original: NVIDIA and Global Robotics Leaders Take Physical AI to the Real World

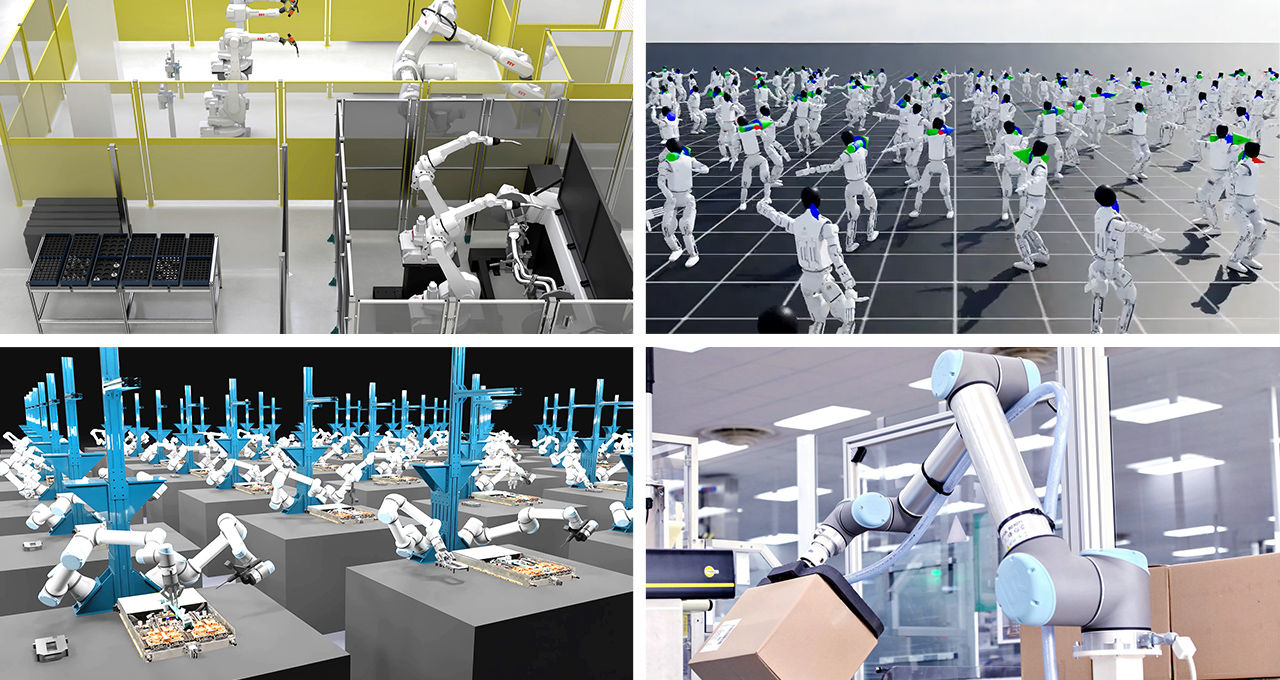

NVIDIA used GTC on March 16, 2026 to frame physical AI as a production problem rather than a research demo. The company said robot brain developers, industrial automation vendors, humanoid startups and healthcare robotics companies are now building on one connected stack for synthetic data generation, simulation, training and deployment.

That stack centers on NVIDIA Cosmos world models, NVIDIA Isaac simulation frameworks and NVIDIA Isaac GR00T robot foundation models. According to the release, ABB Robotics, FANUC, YASKAWA and KUKA are integrating Omniverse libraries and Isaac frameworks into virtual commissioning workflows so they can validate robot cells and factory lines in digital twins before touching live equipment. NVIDIA also said Jetson modules are being used to run real-time AI inference at the edge once those systems move into production.

The humanoid announcements carried equal weight. NVIDIA said 1X, AGIBOT, Agility, Figure, Hexagon Robotics, Humanoid and NEURA Robotics are using Cosmos, Isaac Sim and Isaac Lab to accelerate development and validation. Isaac Lab 3.0 is now in early access, built on the Newton physics engine 1.0 and PhysX, with the goal of speeding large-scale robot learning on DGX-class infrastructure. GR00T N1.7 is also in commercial early access, while Jensen Huang previewed GR00T N2 and said it helps robots succeed at new tasks and in new environments more than twice as often as leading vision-language-action models.

The announcement extended beyond humanoids into factories, logistics and healthcare. NVIDIA said CMR Surgical, Johnson & Johnson MedTech and Medtronic are applying the stack in medical robotics, while Skild AI, Foxconn, KION, Microsoft Azure, Nebius, CoreWeave and Alibaba Cloud are tying the platform into industrial workflows. NVIDIA also highlighted an integration with Hugging Face's LeRobot framework, aimed at connecting NVIDIA's robotics stack to a wider open-source developer base.

The larger message is that the competitive bottleneck in robotics is shifting from isolated hardware demos to integrated toolchains. Vendors now need world models, realistic simulation, scalable training infrastructure and edge deployment paths at the same time. NVIDIA is arguing that whoever controls that full loop will shape the next phase of embodied AI.

Why it matters

- It bundles data generation, simulation and deployment tools into one robotics platform.

- It connects humanoid development to factory, warehouse and surgical use cases.

- It shows that 2026 competition in robotics will depend on software infrastructure as much as robot hardware.

