NVIDIA launches a Physical AI Data Factory Blueprint for robotics and autonomous systems

Original: NVIDIA Announces Open Physical AI Data Factory Blueprint to Accelerate Robotics, Vision AI Agents and Autonomous Vehicle Development View original →

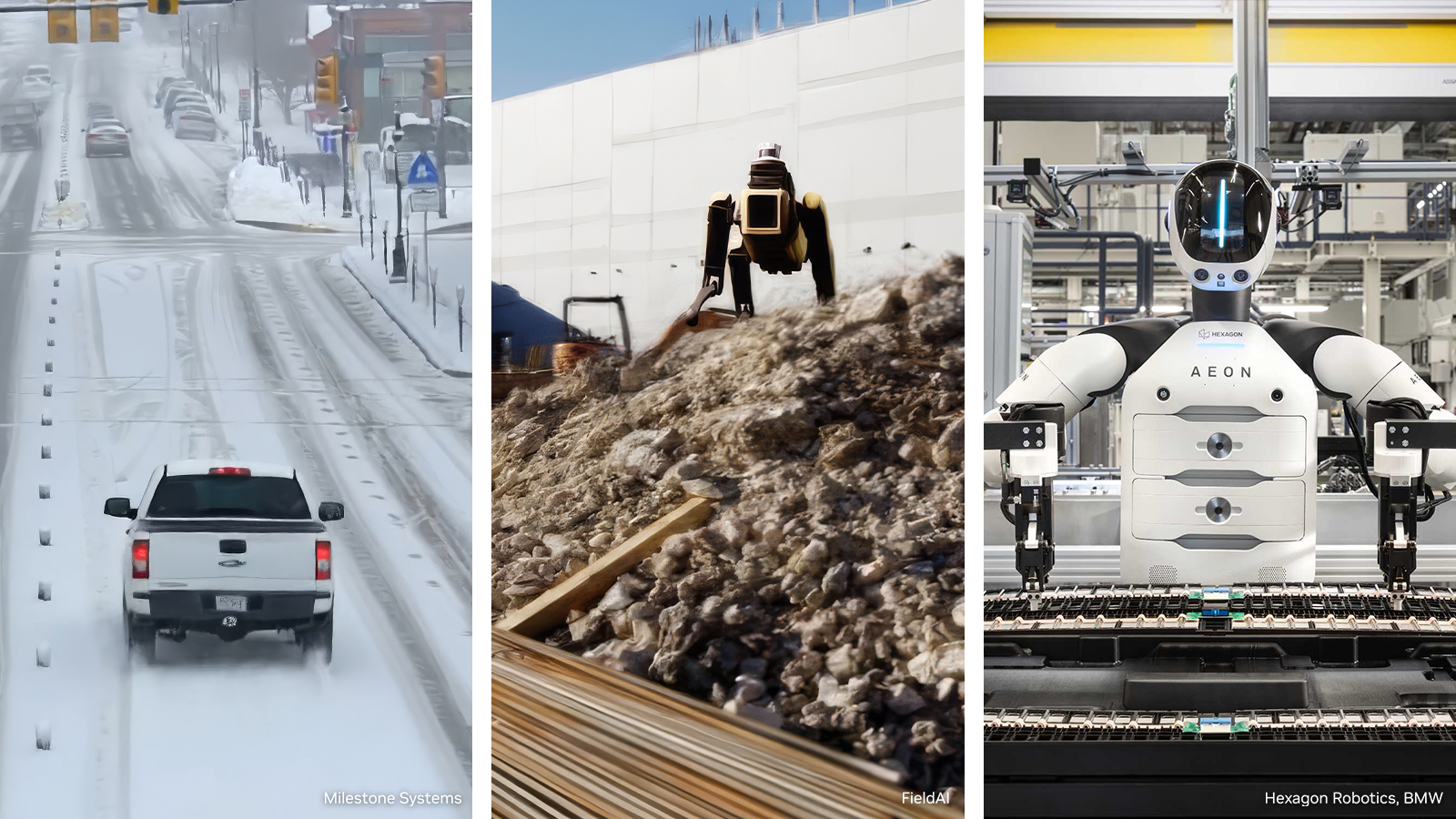

NVIDIA's March 16, 2026 GTC announcement tries to solve one of the hardest problems in physical AI: getting enough high-quality data to train systems that must operate safely in the real world. The company introduced the Physical AI Data Factory Blueprint as an open reference architecture that automates data generation, augmentation and evaluation for robotics, vision AI agents and autonomous vehicles.

The core pitch is that developers should be able to turn limited real-world data into much larger and more diverse training sets. NVIDIA said the blueprint uses Cosmos world foundation models and coding agents to create rare edge cases and long-tail scenarios that are expensive or impractical to capture directly. The workflow is split into Cosmos Curator for dataset processing and annotation, Cosmos Transfer for scaling and diversifying data, and Cosmos Evaluator, powered by Cosmos Reason, for automated scoring and filtering of generated samples.

NVIDIA is already using the blueprint to train and evaluate Alpamayo, which it describes as the first open reasoning-based vision language action models for long-tail autonomous driving. The company also said Skild AI is applying the system to general-purpose robot foundation models, while Uber is using it for autonomous vehicle development. That makes the announcement more than a tooling preview: NVIDIA is positioning the blueprint as the default data pipeline for embodied AI programs that need fast iteration.

Another notable detail is orchestration. NVIDIA said its open source OSMO framework now integrates with Claude Code, OpenAI Codex and Cursor so that coding agents can manage resources, resolve bottlenecks and accelerate model delivery across complex compute environments. On the infrastructure side, Microsoft Azure is integrating the blueprint into an open physical AI toolchain tied to Azure IoT Operations, Microsoft Fabric, Real-Time Intelligence and Microsoft Foundry. Nebius is integrating OSMO into its AI Cloud with RTX PRO 6000 Blackwell Server Edition GPUs, storage, labeling and managed inference services.

If NVIDIA delivers the GitHub release in April, the company could strengthen its role not just as a compute supplier but as the workflow layer for physical AI. The practical question for developers is whether a standard blueprint can meaningfully lower the cost and time required to reach production-quality datasets in robotics and autonomous systems.

Why it matters

- It targets the data bottleneck that slows down robotics and autonomous vehicle development.

- It links world models, evaluation tools and coding agents into one repeatable workflow.

- It extends NVIDIA's influence from chips and cloud infrastructure into the operating layer of physical AI pipelines.

Related Articles

NVIDIA and ABB Robotics are integrating Omniverse libraries into RobotStudio to bring industrial-grade physical AI simulation to factory automation. The companies say RobotStudio HyperReality reaches 99% sim-to-real accuracy and can cut deployment costs by up to 40%.

Why it matters: NVIDIA is aiming generative video research at simulation-ready 3D environments rather than short clips. The tweet says Lyra 2.0 maintains per-frame 3D geometry and uses self-augmented training, while the project page shows outputs as Gaussian splats and meshes that can be exported to Isaac Sim.

NVIDIA released Nemotron-Personas-Korea on Hugging Face with 7 million synthetic personas grounded in Korean public statistics. The dataset matters because agent localization is no longer only translation; it needs region, honorifics, occupations, and public-service context.

Comments (0)

No comments yet. Be the first to comment!