At GTC on March 16, 2026, NVIDIA announced Dynamo 1.0 as a production-grade open source inference stack for generative and agentic AI. NVIDIA says Dynamo can boost Blackwell inference performance by up to 7x while integrating with major frameworks and cloud providers.

#nvidia

After strong criticism of Starfield footage shown with Nvidia's DLSS 5, Bethesda says its art teams will keep adjusting the lighting and final look and that the feature will remain optional for players.

A March 15, 2026 Hacker News post about GreenBoost reached 124 points and 25 comments. The open-source Linux project combines a kernel module and a CUDA shim to tier model memory across VRAM, DDR4, and NVMe, so larger local LLMs can run without changes to inference apps.

A March 18, 2026 Hacker News post about NVIDIA NemoClaw reached 231 points and 185 comments. The alpha project packages OpenClaw on top of NVIDIA OpenShell and Agent Toolkit to run always-on assistants inside sandboxed environments with policy controls and cloud-routed inference.

The latest DLSS 5 backlash has shifted from aesthetics to process after Insider Gaming reported that partner developers at studios including CAPCOM and Ubisoft said they learned about the tech at the same time as the public.

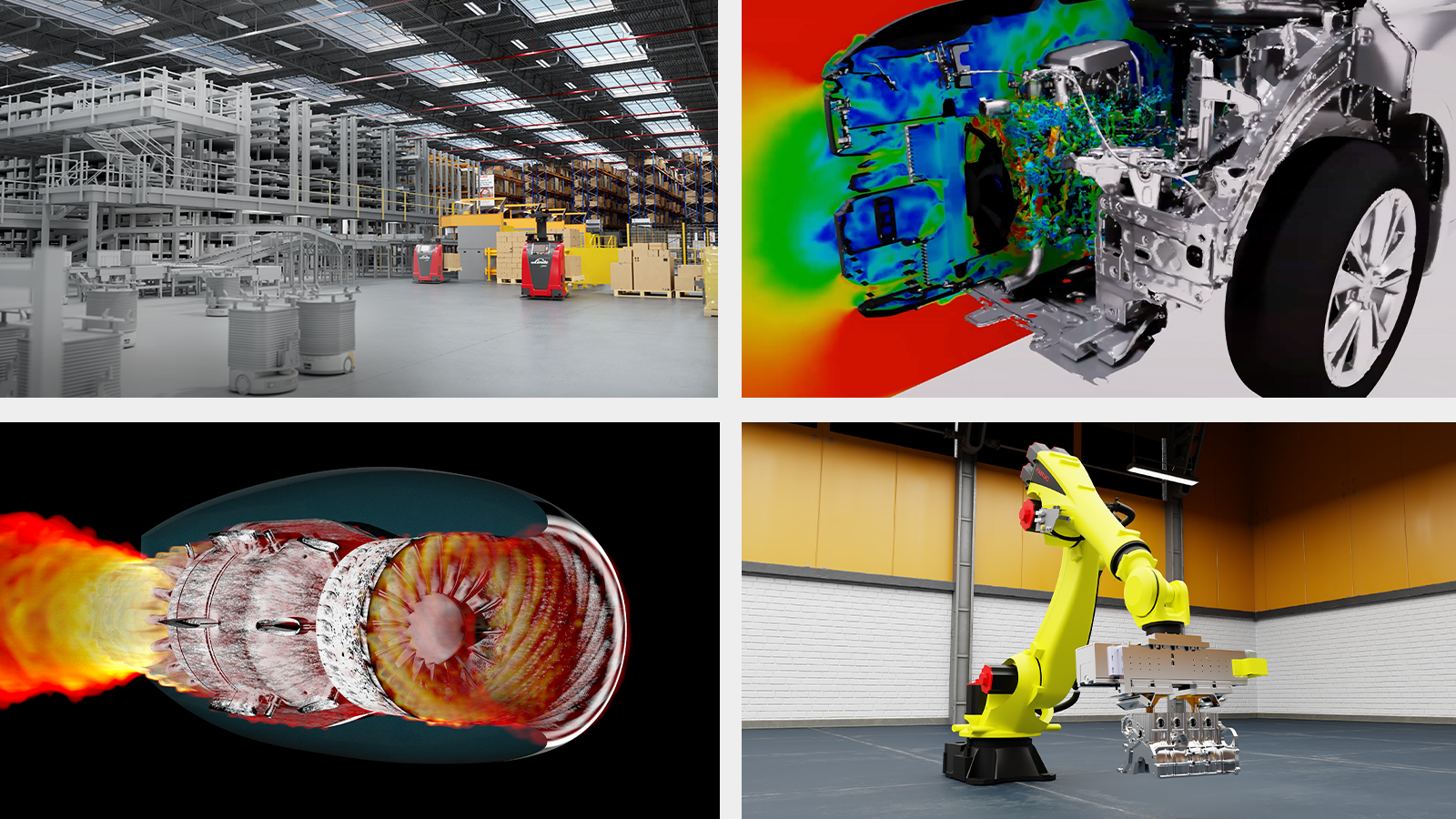

NVIDIA said on March 16, 2026 that Cadence, Dassault Systèmes, PTC, Siemens and Synopsys are bringing NVIDIA-powered AI agents and GPU-accelerated software into industrial workflows. The announcement spans chip design, automotive simulation, digital twins and manufacturing infrastructure across AWS, Google Cloud, Microsoft Azure, OCI and major OEM partners.

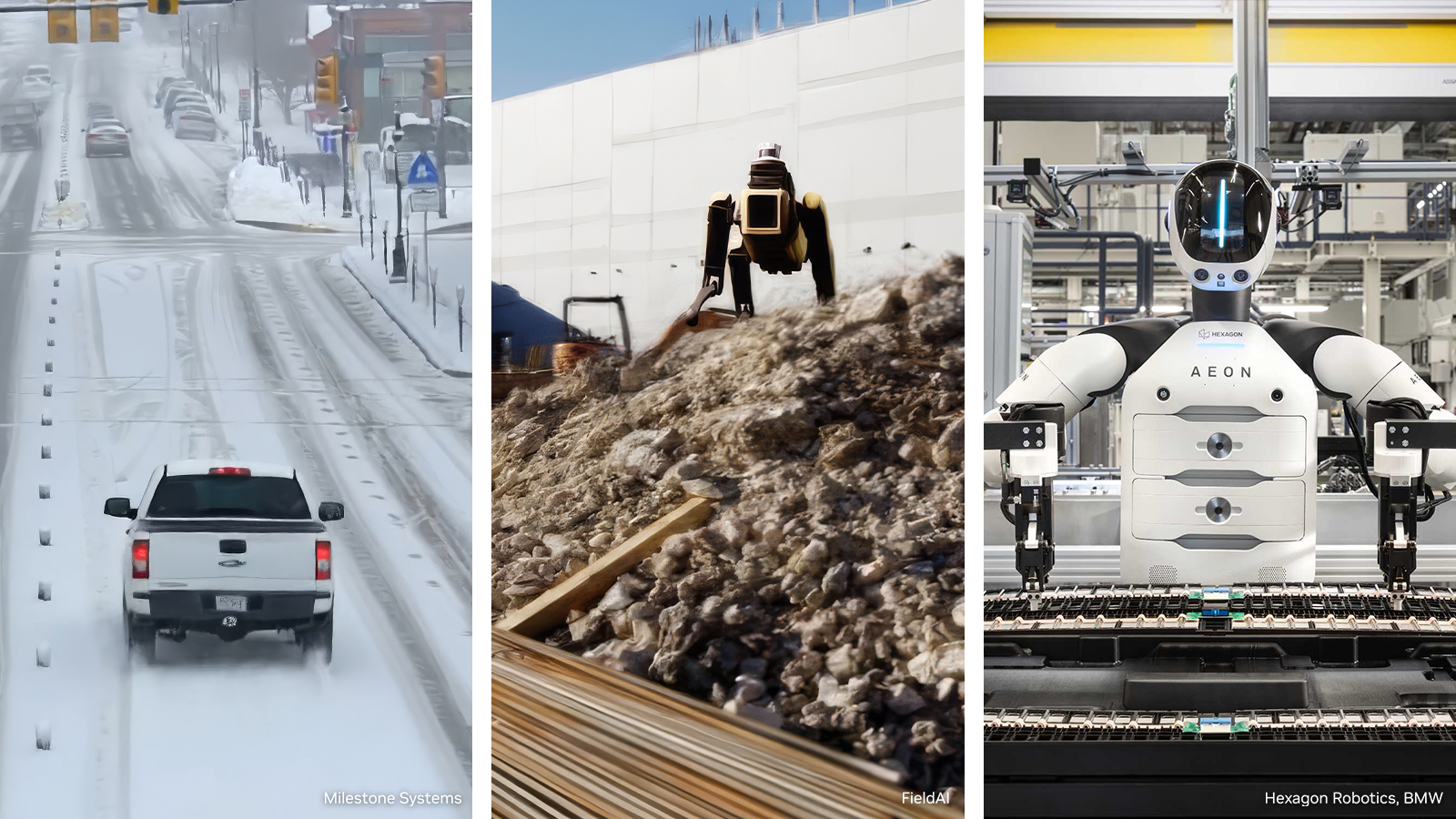

NVIDIA on March 16, 2026 introduced an open reference architecture for generating, augmenting and evaluating training data for robotics, vision AI agents and autonomous vehicles. Microsoft Azure and Nebius are integrating the blueprint, and NVIDIA said the package is expected to land on GitHub in April.

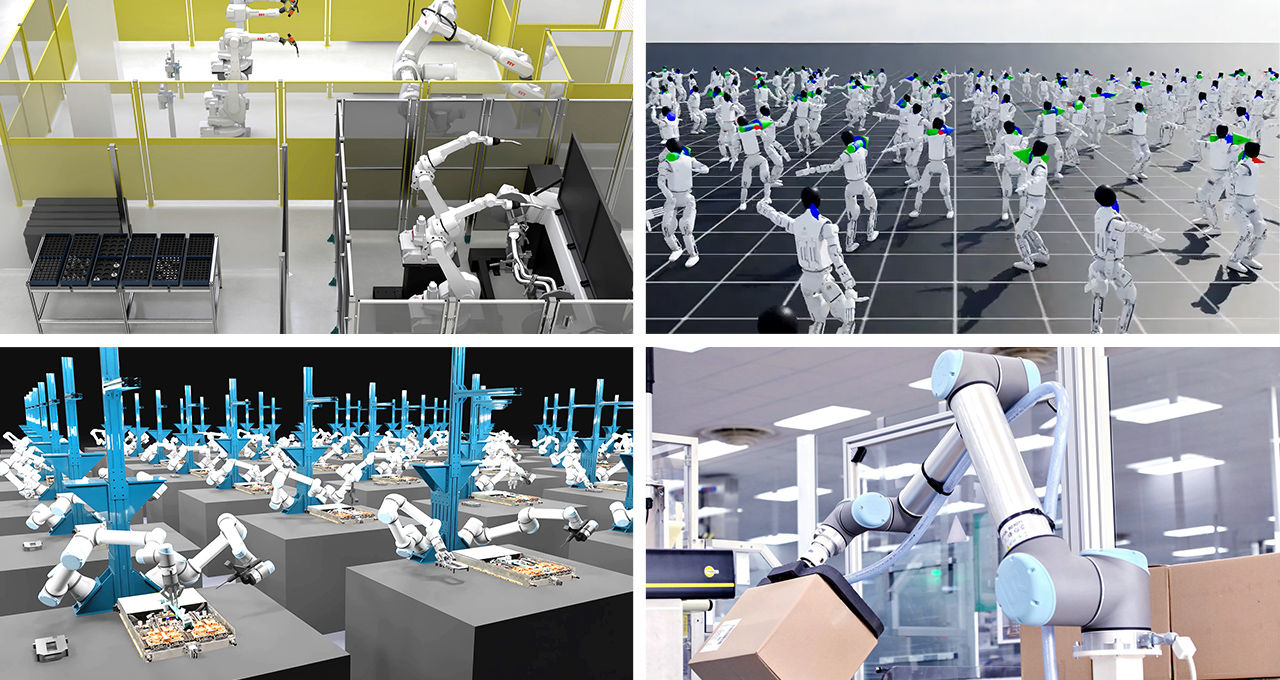

NVIDIA said on March 16, 2026 that ABB, FANUC, Figure, KUKA, Skild AI and other robotics players are building on Cosmos, Isaac and GR00T to move physical AI from simulation into production. The release also introduced Cosmos 3, Isaac Lab 3.0 in early access, and commercial early access for GR00T N1.7.

NVIDIA says Vera is the first processor built specifically for agentic AI and reinforcement learning. On Hacker News, the announcement reached 165 points and 98 comments as readers focused on CPU-GPU coupling, rack density, and the practical value of NVIDIA's efficiency claims.

OpenAI said it raised $110B at a $730B pre-money valuation and added Amazon and NVIDIA to a broader infrastructure push. The company tied the financing to fast growth across Codex, ChatGPT, and enterprise deployments.

NVIDIA on March 16, 2026 introduced its Physical AI Data Factory Blueprint, an open reference architecture for generating, augmenting, and evaluating training data for robotics, vision AI agents, and autonomous vehicles. The company says the stack combines Cosmos models, coding agents, and cloud infrastructure from partners such as Microsoft Azure and Nebius to lower the cost and time of physical AI training at scale.

NVIDIA said on March 16, 2026 that Dynamo 1.0 is entering production as open source software for generative and agentic inference at scale. The company says the stack can raise Blackwell inference performance by up to 7x and is already supported across major cloud providers, inference platforms, and AI-native companies.