NVIDIA Lyra 2.0 turns single images into explorable 3D worlds

Original: Today, we released Lyra 2.0, a framework for generating persistent, explorable 3D worlds at scale, from NVIDIA Research. Generating large-scale, complex environments is difficult for AI models. Current models often “forget” what spaces look like and lose track of movement over time, causing objects to shift, blur, or appear inconsistent. This prevents them from creating the reliable 3D environments required for downstream simulations. Lyra 2.0 solves these issues by: ✅ Maintaining per-frame 3D geometry to retrieve past frames and establish spatial correspondences ✅ Using self-augmented training to correct its own temporal drifting. Lyra 2.0 turns an image into a 3D world you can walk through, look back, and drop a robot into for real-time rendering, simulation, and immersive applications. ➡️ Learn more: https://research.nvidia.com/labs/sil/projects/lyra2/ 📄 Read the paper: https://arxiv.org/abs/2604.13036

What the tweet revealed

NVIDIA AI Developer wrote that “Lyra 2.0” is a framework for generating “persistent, explorable 3D worlds at scale”. The post says current AI models often forget what spaces look like and lose track of movement over time, causing objects to shift or blur. Lyra 2.0’s proposed fixes are maintaining per-frame 3D geometry so the model can retrieve past frames and establish spatial correspondences, and self-augmented training to correct temporal drift.

The account is NVIDIA’s developer-facing AI channel, which regularly surfaces research code, model demos, and applied AI workflows. In this case, the tweet links to a research page and a paper, so the post is more than a visual demo. The project page frames Lyra 2.0 as a way to generate camera-controlled walkthrough videos and then lift them into 3D through feed-forward reconstruction.

Why the technical detail matters

The key problem is consistency. Text-to-video and image-to-video systems can generate plausible short clips, but long scene exploration requires the model to remember geometry when the camera turns, moves back, or revisits an area. NVIDIA says Lyra 2.0 addresses spatial forgetting by routing information across generated frames and addresses temporal drift with self-augmented training. The project page also shows the generated scenes converted into 3D Gaussian splats and meshes.
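To make the idea of using per-frame 3D geometry to retrieve past frames more concrete, here is a toy sketch. It is not Lyra 2.0’s implementation: it assumes each generated frame keeps a small set of reconstructed 3D points in world coordinates, that the current camera is a simple pinhole model, and that past frames are ranked by how many of their points fall inside the current view. The names `FrameMemory`, `retrieve_frames`, and the `min_overlap` threshold are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional, Sequence, Tuple

Vec3 = Tuple[float, float, float]
Mat3 = Tuple[Vec3, Vec3, Vec3]


@dataclass
class Camera:
    # Hypothetical pinhole camera: p_cam = R @ p_world + t (R is row-major, world-to-camera).
    R: Mat3
    t: Vec3
    fx: float
    fy: float
    cx: float
    cy: float
    width: int
    height: int


@dataclass
class FrameMemory:
    # Hypothetical per-frame memory: an id plus 3D points (world coordinates)
    # reconstructed from that generated frame.
    frame_id: int
    points: List[Vec3]


def project(cam: Camera, p: Vec3) -> Optional[Tuple[float, float]]:
    """Project a world point to pixel (u, v); return None if it is behind the camera."""
    x, y, z = [sum(cam.R[i][j] * p[j] for j in range(3)) + cam.t[i] for i in range(3)]
    if z <= 1e-6:
        return None
    return cam.fx * x / z + cam.cx, cam.fy * y / z + cam.cy


def visible_fraction(cam: Camera, frame: FrameMemory) -> float:
    """Fraction of a past frame's points that land inside the current image."""
    if not frame.points:
        return 0.0
    hits = 0
    for p in frame.points:
        uv = project(cam, p)
        if uv is not None and 0 <= uv[0] < cam.width and 0 <= uv[1] < cam.height:
            hits += 1
    return hits / len(frame.points)


def retrieve_frames(
    cam: Camera, memory: Sequence[FrameMemory], k: int = 2, min_overlap: float = 0.1
) -> List[int]:
    """Return ids of up to k past frames whose geometry best overlaps the current view."""
    scored = [(visible_fraction(cam, f), f.frame_id) for f in memory]
    scored = [s for s in scored if s[0] >= min_overlap]
    # Highest overlap first; ties broken by the earlier frame id.
    scored.sort(key=lambda s: (-s[0], s[1]))
    return [frame_id for _, frame_id in scored[:k]]
```

A real system would more likely work with depth maps, dense correspondences, and learned features than with a visible-point count; the sketch only shows why keeping explicit geometry turns “which earlier frames can see this view?” into a cheap geometric query instead of something the generator must remember implicitly.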

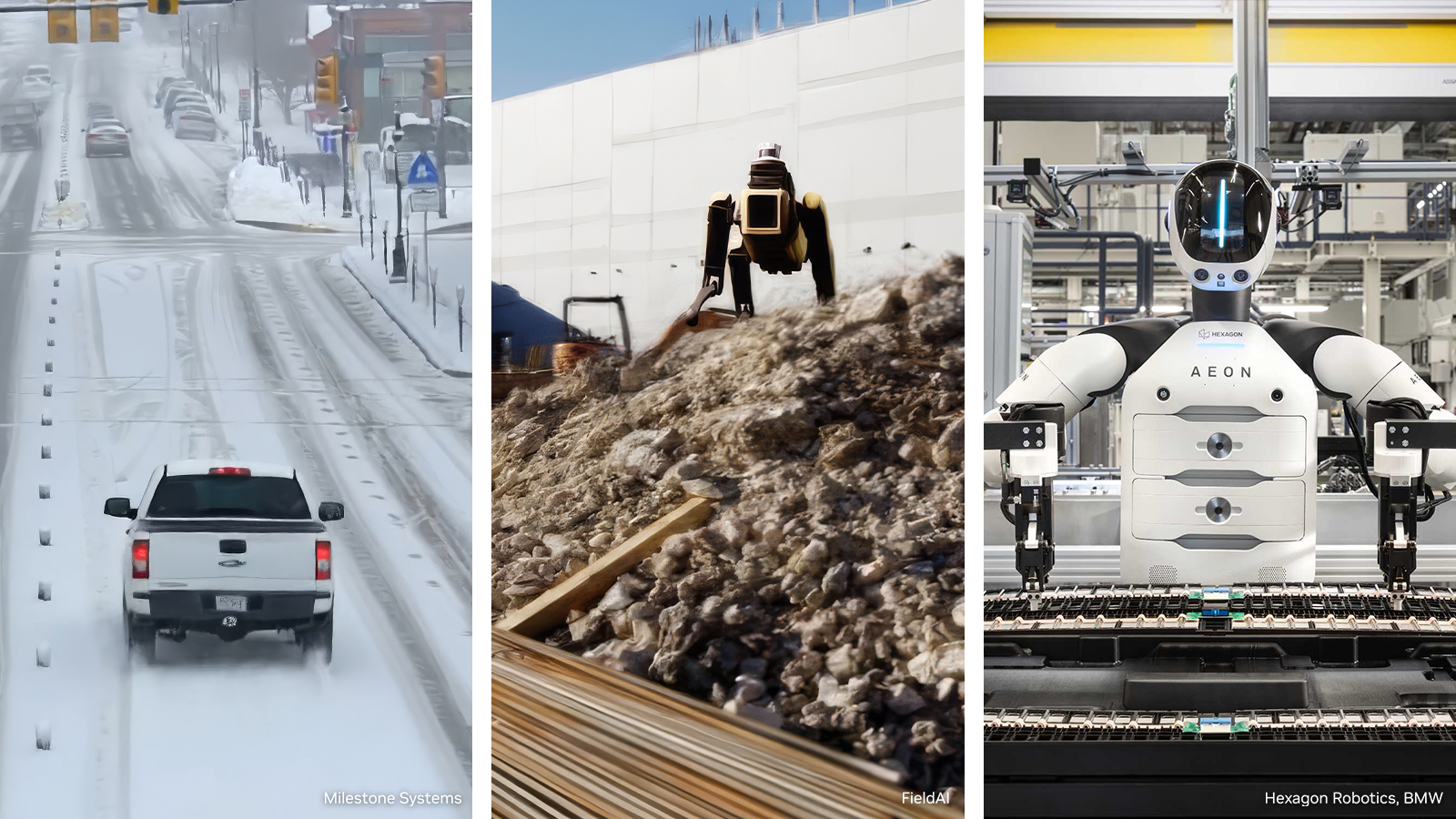

The robotics angle is the high-signal part. NVIDIA says generated scenes can be exported to physics engines, with examples in NVIDIA Isaac Sim for robot navigation and interaction. That points to a path from image-based world generation into embodied AI simulation, where the output needs to be spatially coherent enough for agents to move through and perceive, and for researchers to test policies in.

What to watch next is whether NVIDIA releases enough code and evaluation detail for outside labs to stress-test consistency over long camera paths, and whether Isaac Sim users can turn Lyra scenes into useful synthetic training environments rather than merely attractive walkthroughs.

Source: NVIDIA AI Developer X post · Lyra 2.0 project page · arXiv paper
