Google is no longer treating AI memory as a niche add-on. By bringing Gemini Personal Intelligence to India, it is testing whether a model that reads Gmail, Photos, and watch history can become a daily assistant in one of its biggest markets.

#gemini

On April 8, 2026, Google introduced notebooks in Gemini as a new project layer for grouping past chats, files and instructions. Google says notebooks sync with NotebookLM and are rolling out first on the web for Google AI Ultra, Pro and Plus subscribers.

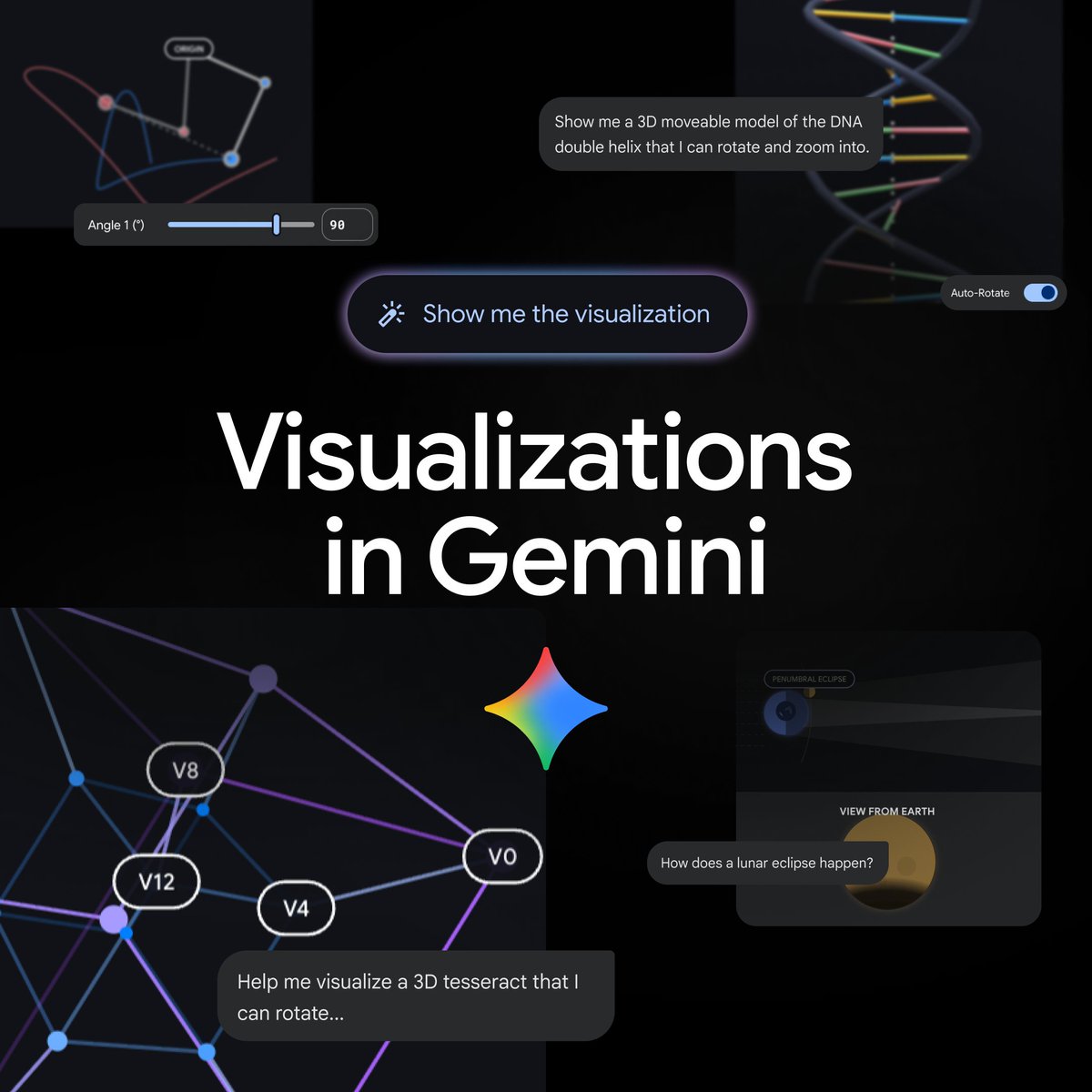

On April 9, 2026, Google said Gemini can now generate interactive visualizations directly in chat. Google’s product page says the rollout adds functional simulations, adjustable parameters and 3D exploration to the Gemini app for global users on the Pro model.

Google introduced notebooks in Gemini on April 8, 2026, adding a shared workspace that syncs chats and source files with NotebookLM. The initial rollout starts on the web for Google AI Ultra, Pro, and Plus subscribers, with mobile, more European countries, and free users to follow.

Google said on March 27, 2026, that Google Translate's Live translate with headphones is now available on iOS and is expanding to more countries for both Android and iOS users. Google's official product pages say the feature supports 70+ languages, works with any pair of headphones, and builds on Gemini speech-to-speech translation designed to preserve tone, emphasis, and cadence.

Google on April 8 began rolling out Gemini for Home early access in Japan. The update moves Google Home from fixed commands toward conversational control, AI camera summaries, and natural-language video search.

Google said on March 26, 2026, that Search Live is expanding to every language and country where AI Mode is already available. The rollout reaches more than 200 countries and territories and uses Gemini 3.1 Flash Live to make search more conversational, voice-first, and camera-aware.

A Hacker News thread pushed a GitHub repo claiming it can detect and weaken Gemini image SynthID watermarks using signal processing alone. The more important debate was not the headline claim itself, but whether the project had been validated against Google's own detector and what that says about watermark-based provenance overall.

Google AI said on March 26, 2026, that Gemini 3.1 Flash Live is launching for developers building real-time voice and vision agents. Google highlighted faster natural dialogue, better task completion in noisy environments, and stronger complex-instruction following, while its Live API docs describe low-latency multimodal streaming with tool use and 70-language support.

Google DeepMind said on March 26, 2026, that Gemini 3.1 Flash Live is rolling out in Gemini Live and Google Search Live, while developers can access it through Google AI Studio. Google’s announcement positions 3.1 Flash Live as its highest-quality audio model, with lower latency, improved tonal understanding, and benchmark gains including 90.8% on ComplexFuncBench Audio.

On March 26, 2026, Google expanded Search Live to every language and location where AI Mode is available. The move pushes multimodal voice-and-camera search to more than 200 countries and territories and gives Gemini’s live audio stack a much larger real-world footprint.

Google DeepMind said on February 11, 2026, that Gemini Deep Think is being used on professional research problems across mathematics, physics, and computer science. The company cited its Aletheia math agent, a score of up to 90% on IMO-ProofBench Advanced, and collaborations on 18 research problems as evidence that AI is moving from benchmark performance toward real scientific workflow support.