HN pushed this past 400 comments because the story was not just nostalgia. It asked what evidence of student thinking should look like when AI can produce the polished draft.

#education

HN upvoted MacMind because it shrinks transformer mystique to something inspectable: 1,216 parameters in HyperTalk on a Macintosh SE/30. The demo learns bit-reversal for FFT using embeddings, positional encoding, self-attention, backpropagation and gradient descent.
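For readers unfamiliar with the target task, here is a minimal sketch of the bit-reversal permutation that FFT implementations use to reorder their inputs. It is written in Python for illustration only and is not MacMind's HyperTalk code; the function names `bit_reverse` and `bit_reversal_permutation` are hypothetical.

```python
def bit_reverse(index: int, width: int) -> int:
    """Reverse the lowest `width` bits of `index`."""
    result = 0
    for _ in range(width):
        # Shift the accumulated bits left and append the current low bit.
        result = (result << 1) | (index & 1)
        index >>= 1
    return result


def bit_reversal_permutation(n: int) -> list[int]:
    """Return the bit-reversed ordering of 0..n-1, for n a power of two."""
    if n <= 0 or n & (n - 1):
        raise ValueError("n must be a positive power of two")
    width = n.bit_length() - 1  # number of bits needed to index n items
    return [bit_reverse(i, width) for i in range(n)]


print(bit_reversal_permutation(8))  # [0, 4, 2, 6, 1, 5, 3, 7]
```

The mapping is its own inverse, so applying it twice restores the original order. A model trained on this task has to reproduce that fixed input-to-output mapping from examples.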

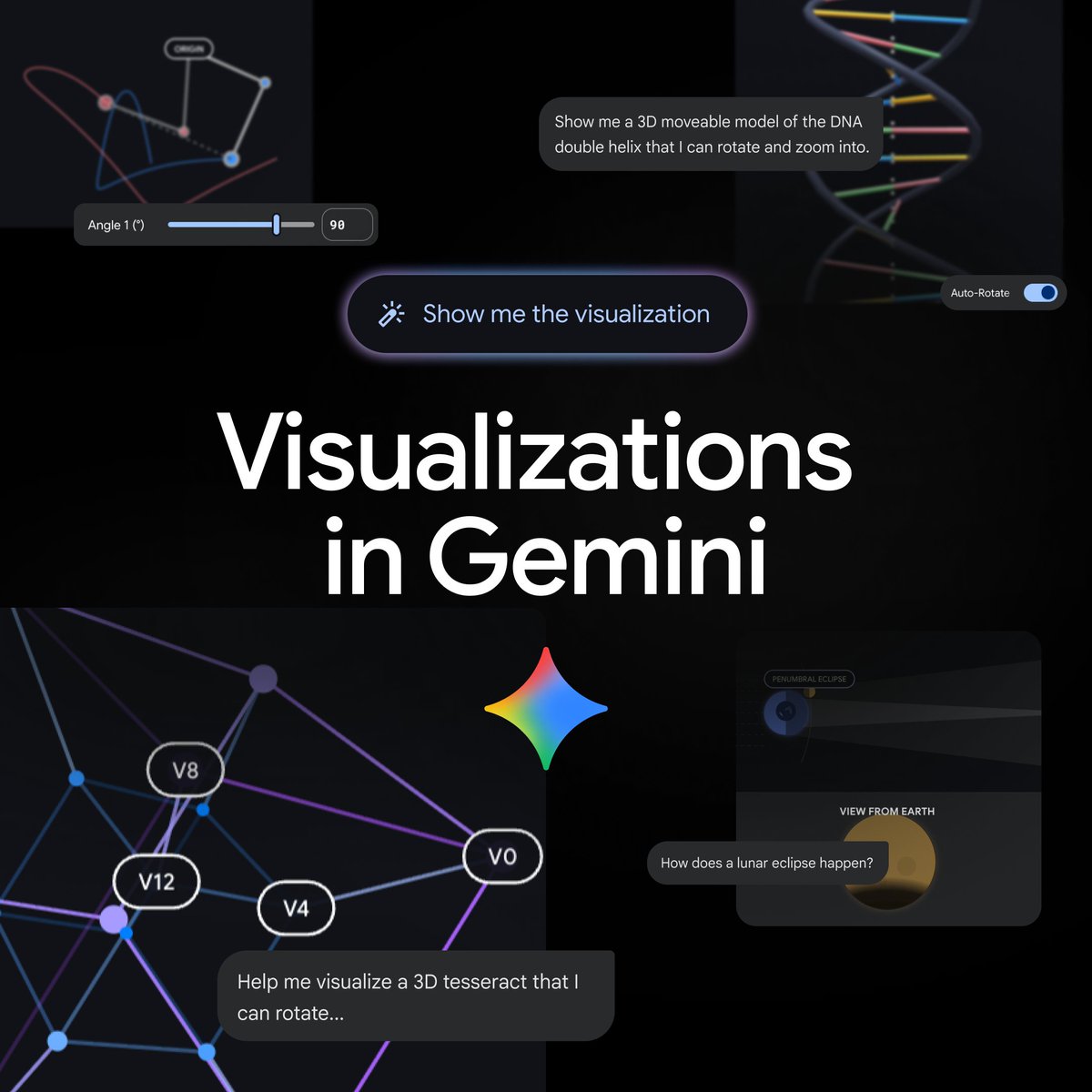

On April 9, 2026, Gemini said it can now generate interactive visualizations directly in chat. Google’s product page says the rollout adds functional simulations, adjustable parameters and 3D exploration to the Gemini app for global users on the Pro model.

A recent Show HN post highlighted GuppyLM, a tiny education-first language model trained on 60K synthetic conversations with a deliberately simple transformer stack. The project stands out because readers can inspect and run the whole pipeline in Colab or directly in the browser.

A Show HN thread highlighted GuppyLM, a tiny 8.7M-parameter transformer with a 60K synthetic conversation dataset and Colab notebooks. The point is not state-of-the-art performance, but making the full LLM pipeline inspectable from data generation to inference.

Stanford's public CS25 course is again operating as an open lecture stream for Transformer research, with Zoom access, recordings, and a community layer that extends beyond campus.

Anthropic and Rwanda say a new 3-year agreement will expand Claude into national health, education, and public-sector workflows. The deal combines deployment with training, API credits, and local capacity building rather than simple tool access alone.

A Reddit post in r/MachineLearning highlights a new MIT 2026 course on flow matching and diffusion models with lecture videos, mathematically self-contained notes, and coding exercises. The updated course expands into latent spaces, diffusion transformers, and discrete diffusion language models.

OpenAI Developers announced on March 20, 2026 that verified university students in the United States and Canada can claim $100 in Codex credits. OpenAI’s support page says that equals 2,500 ChatGPT credits, requires student verification through SheerID, and expires 12 months after the grant date.

OpenAI is rolling out dynamic visual explanations for more than 70 core math and science concepts in ChatGPT. The feature is available globally across all plans and is meant to turn formulas, variables, and graphs into interactive learning modules.

A Hacker News discussion of a Techdirt article argues that AI-detector-driven grading is pushing students to write worse, hide their process, and even use tools like GPTZero to optimize for 'human' scores.

OpenAI announced a new Learning Outcomes Measurement Suite focused on causal, evidence-based evaluation of AI in classrooms. The company says independent pilots in 2026 will span seven countries, more than 10,000 students, and 10 partner institutions.