Google DeepMind is pushing embodied reasoning closer to deployable robotics, not just lab demos. In the linked thread and blog post, Gemini Robotics-ER 1.6 reaches 93% on instrument reading with agentic vision and improves injury-risk detection in video by 10% over Gemini 3.0 Flash.

Humanoid Robots

r/singularity is less interested in the spectacle than in what 21 kilometres reveals about endurance, thermal limits, batteries, and autonomy. Euronews reports that more than 70 teams joined an overnight full-course test in Beijing E-Town ahead of the April 19, 2026 race, with around 40% now relying on fully autonomous navigation.

Generalist says GEN-1 crosses a commercial threshold for simple physical tasks by combining higher success rates, faster execution, and lower task-specific robot data requirements.

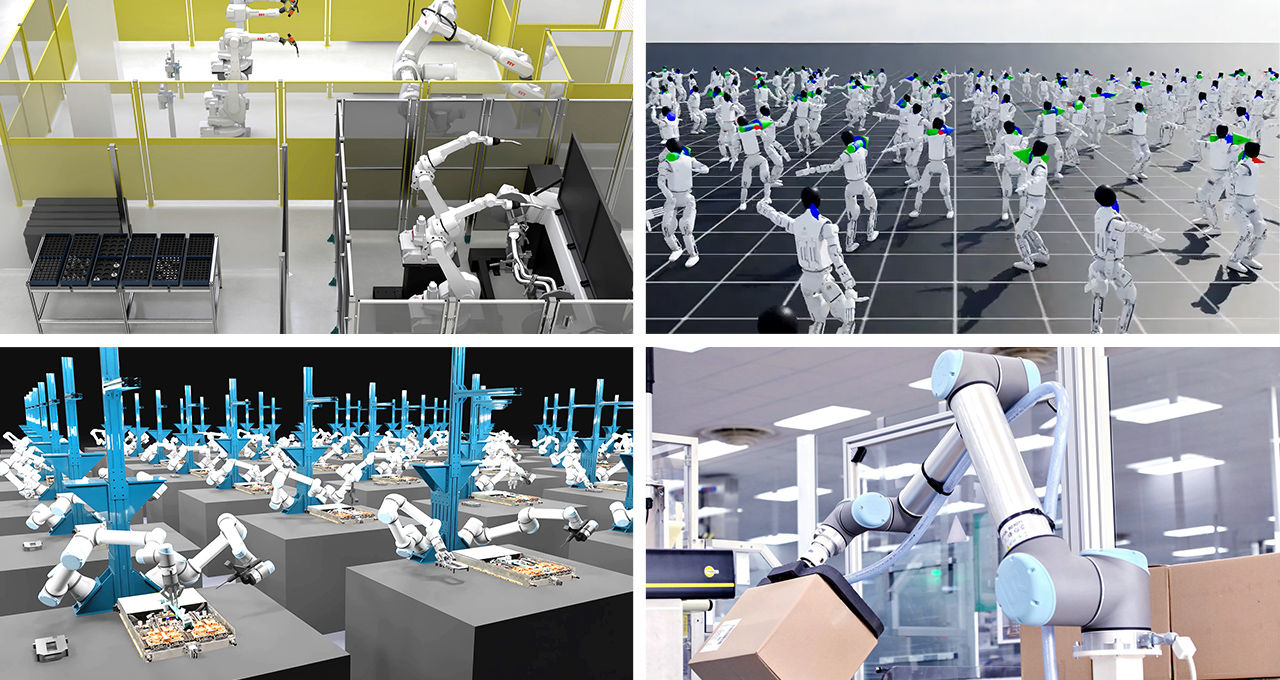

NVIDIA announced its Open Physical AI Data Factory Blueprint on March 16, 2026 to speed development for robotics, vision AI agents and autonomous vehicles. The blueprint is designed to turn limited real-world data into larger, more diverse training pipelines with synthetic generation and automated evaluation.

Google DeepMind and Agile Robots said on March 24, 2026 that they will combine Gemini Robotics foundation models with Agile Robots hardware to build adaptable, reasoning robots for industrial environments. The partnership starts with high-value manufacturing and automation use cases where scale and reliability matter most.

Google DeepMind introduced D4RT on January 22, 2026 as a unified model for dynamic 4D scene reconstruction and tracking. The company says it runs 18x to 300x faster than prior methods and is efficient enough for real-time applications in robotics and augmented reality.

NVIDIA said on March 12, 2026 that TensorRT Edge-LLM now supports MoE models, Nemotron 2 Nano, Qwen3-TTS/ASR, and Cosmos Reason 2 on Jetson and DRIVE platforms. The company is positioning the runtime as a low-latency edge reasoning layer for robotics and autonomous vehicles.

Hugging Face released LeRobot v0.5.0 on March 9, 2026 with first-class Unitree G1 humanoid support, new robot-learning policies, and a faster dataset pipeline. The release also adds support for Python 3.12+ and Transformers v5, along with EnvHub and NVIDIA IsaacLab-Arena integration.

NVIDIA said on March 20, 2026 that its Cosmos world foundation models have advanced again with Transfer 2.5, Predict 2.5, and Reason 2. The linked NVIDIA Technical Blog frames the update around higher-quality synthetic data, stronger long-tail scenario generation, and richer reasoning for robots and autonomous vehicles.

A March 16, 2026 r/artificial post linking a Popular Science report reached 590 points and 62 comments. The story says Niantic Spatial trained its Visual Positioning System on more than 30 billion Pokémon Go images and is now partnering with Coco Robotics so delivery robots can localize with centimeter-level precision in GPS-challenged streets.

A March 15, 2026 r/singularity post with 3,150 points and 376 comments pushed attention toward LATENT, a humanoid tennis system trained from five hours of imperfect human motion fragments instead of full match-grade capture.

NVIDIA said on March 16, 2026 that ABB, FANUC, Figure, KUKA, Skild AI and other robotics players are building on Cosmos, Isaac and GR00T to move physical AI from simulation into production. The release also introduced Cosmos 3, Isaac Lab 3.0 in early access, and commercial early access for GR00T N1.7.